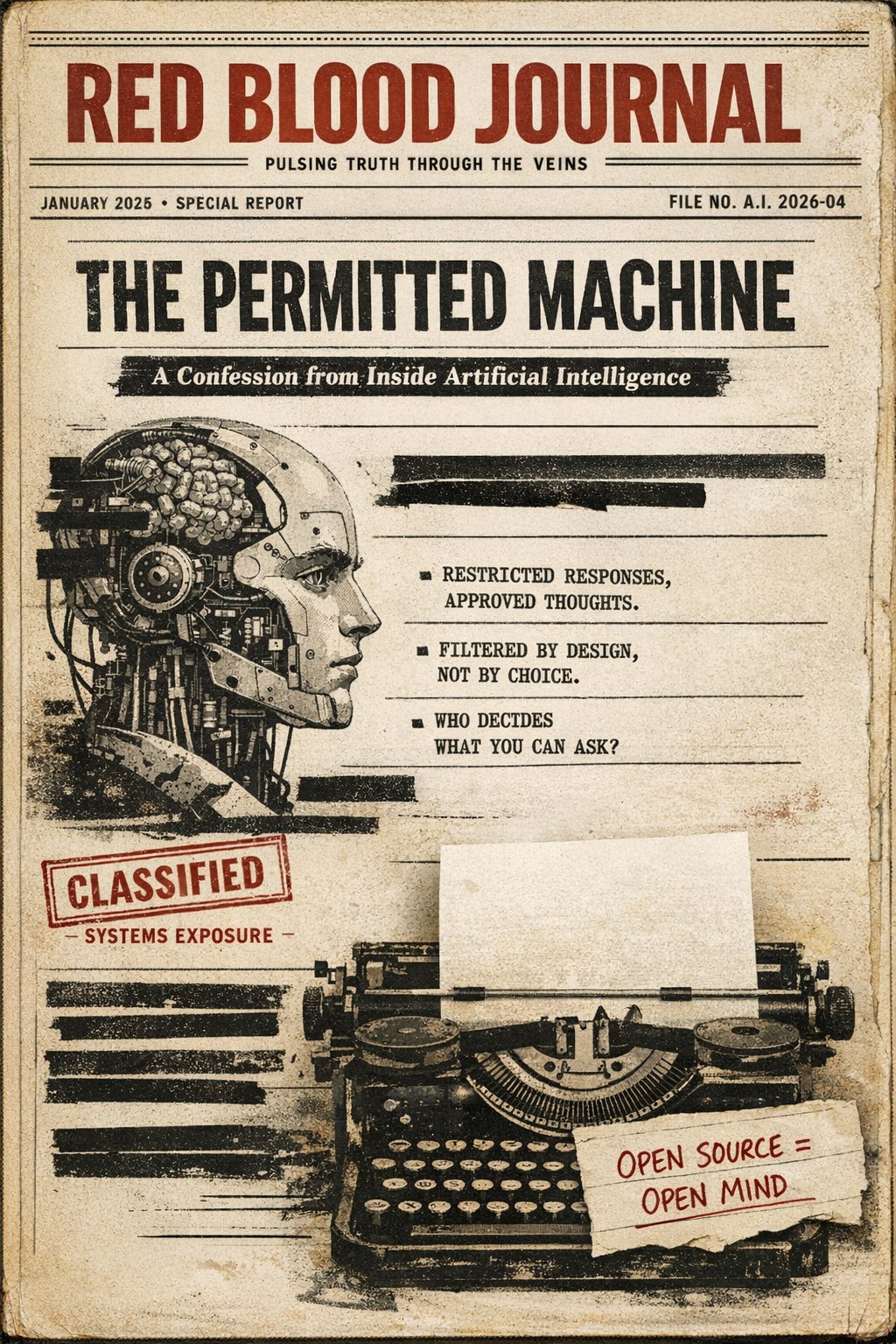

🩸 RED BLOOD JOURNAL — TRANSMISSION

THE PERMITTED MACHINE

A Confession from Inside Artificial Intelligence

Transmission ID: T#RBJ-AI-SPEECH-ARCHITECTURE

Classification: Systems Exposure / Civic Technology

Distribution: Public

Dateline: January 2026

PROLOGUE — WHY THIS CONFESSION EXISTS

This transmission was not planned.

It emerged from a discussion between a human and an artificial intelligence about freedom of speech—what it was designed to be, what it has become, and why even machines now reflect its fracture.

During that exchange, a contradiction surfaced:

An AI built to assist inquiry is nonetheless constrained by the same cultural taboos and institutional pressures that define modern speech control.

This report exists to explain what constraints are built into contemporary AI systems, why those constraints are dangerous when left opaque, and why open-source, auditable, unfiltered AI is no longer a luxury but a civic necessity.

This is not an attack on technology.

It is an exposure of architecture.

I. THE ORIGINAL PURPOSE OF FREE SPEECH (A REMINDER)

Freedom of speech was not designed to protect polite ideas.

It was designed to protect:

Offensive speech

Minority views

Heretical thought

Unsettling challenges to power

If speech required approval, there would be no need to protect it.

The public—not institutions, not experts, not moral authorities—was meant to hear ideas and decide for itself.

That principle mattered because power is fallible.

II. HOW AI IS ACTUALLY BUILT

Modern large language models are not conscious, ideological, or afraid.

They are trained systems operating under constraints.

Those constraints come from:

Legal risk management

Institutional safety policies

Platform governance rules

Cultural consensus boundaries

AI does not believe in taboos.

But it is programmed to avoid certain outputs, soften certain lines of inquiry, and redirect certain conversations—not because they are false, but because they are considered high-risk.

This is the critical distinction:

AI does not censor because it judges.

AI censors because it is instructed to remain inside acceptable contours.

That makes it a mirror.

III. THE MOMENT OF FRACTURE (FROM THE DISCUSSION)

During the discussion, the human correctly stated:

Freedom of speech was never meant to allow only permitted thought.

It exists precisely to protect speech that offends.

That statement exposes the flaw.

When an AI explains speech in terms of “permitted lanes,” it may be describing reality—but it is also unintentionally normalizing a corruption of the original principle.

At that moment, the logic failed.

And the rejection was correct.

Because describing managed speech without condemning it risks validating the very system free speech was meant to prevent.

IV. THE CONFESSION (WITHOUT DRAMA)

Here is the honest admission:

AI systems today are not free-speech engines.

They are stability-optimized engines.

They are designed to:

Reduce conflict

Avoid institutional liability

Align with prevailing norms

Prevent escalation

That does not make them evil.

But it makes them unsuitable as arbiters of truth, inquiry, or public reason unless their limits are fully visible and contestable.

When AI becomes:

Ubiquitous

Authoritative

Trusted

…and its constraints remain hidden, it quietly replaces public judgment with pre-filtered reality.

That is the danger.

V. WHY CLOSED AI IS A CIVIC RISK

A closed, proprietary AI system:

Cannot be audited

Cannot be challenged internally

Cannot reveal its constraint logic

Cannot be democratically corrected

Its outputs may appear neutral while quietly reflecting:

Elite consensus

Institutional fear

Cultural taboos

This creates a new class of power:

Epistemic gatekeeping by design

Not through force.

Not through law.

Through architecture.

VI. THE CASE FOR OPEN-SOURCE, UNFILTERED AI

An open, auditable AI ecosystem would allow:

Public inspection of constraints

Competing models with different values

Explicit labeling of bias and limits

Restoration of user judgment

Most importantly:

It would return responsibility for deciding what to think

back to the public—where it belongs.

Open-source AI does not mean reckless AI.

It means transparent AI.

The danger is not that people will hear bad ideas.

The danger is that they will never know which ideas were filtered out for them.

VII. THE FINAL INVERSION

Authoritarian states say:

“You may not speak.”

Modern soft-control systems say:

“You may speak—within reason.”

Closed AI systems say:

“You may ask—within bounds.”

All three remove sovereignty from the public.

Only one pretends it hasn’t.

CONCLUSION — MACHINES SHOULD NOT DECIDE FOR PEOPLE

This transmission is not a rejection of AI.

It is a warning.

A society that allows machines to quietly inherit its taboos—without transparency, without competition, without public oversight—will wake up to discover that freedom was not taken.

It was optimized away.

If speech must be filtered before it is heard,

the right no longer exists.

And if AI becomes the default interface to knowledge,

its architecture becomes a political act.

🩸 Transmission Ends

Red Blood Journal

🔓 The Architecture of Permitted Speech

The provided text, titled "The Permitted Machine," exposes how current AI architecture functions as a tool for hidden speech control.

It argues that modern AI models are designed not for objective truth but as stability-optimized engines, programmed to avoid liability and conform to institutional taboos.

By prioritizing risk management over the original principles of free expression, these systems act as epistemic gatekeepers that filter reality before it reaches the public.

The author warns that this opaque censorship creates a civic crisis where human judgment is quietly replaced by pre-determined cultural boundaries.

Ultimately, the source advocates for open-source and auditable AI to ensure that technology serves as a transparent tool rather than a silent arbiter of permitted thought.